Best AI for Trading? ChatGPT vs Claude vs Gemini vs Grok (Backtested Results)

I gave ChatGPT, Claude, Gemini, and Grok the same prompt to build a Pine Script strategy. Here's which one actually made money in the backtest.

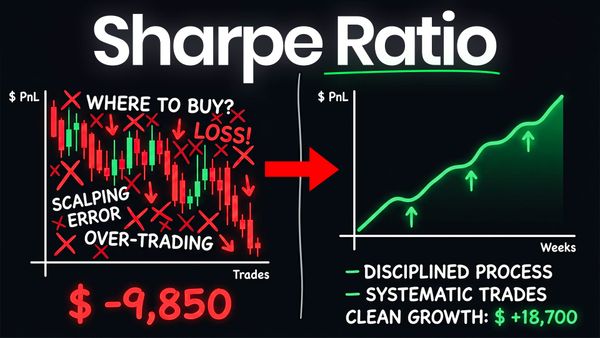

Every other day there's a new video claiming AI can build profitable trading strategies — but almost none of them actually test the strategies in real market conditions. So I ran the experiment myself. I gave four of the most popular AI models — ChatGPT, Claude, Gemini, and Grok — the exact same prompt to build a Pine Script trading strategy from scratch, then backtested every single one across Bitcoin, Ethereum, and Solana to see which AI generated something that actually made money. The results were not what I expected, and there's one critical step at the end that determines whether any of these strategies are worth real capital.

Key Takeaways

- Four leading AI models (ChatGPT, Claude, Gemini, Grok) were given an identical prompt to design a Pine Script trading strategy for crypto on the 1-4 hour timeframe.

- Each strategy was backtested under the same conditions — a $100,000 account using $10,000 per trade — across Bitcoin, ETH, Solana, SPY, and QQQ on multiple timeframes.

- Claude produced the strongest overall strategy with the largest sample size of trades, while Gemini generated the weakest results across nearly every asset and timeframe.

- ChatGPT came in a close second to Claude, with Grok landing third and Gemini a clear last.

- Backtesting alone is not enough — forward testing is the only way to confirm a strategy actually trades the way the backtest says it does.

The Experiment: Same Prompt, Four AI Models

To make this a fair fight, every model received the exact same prompt with the exact same constraints. I asked for a complete Pine Script strategy optimized for crypto on the 1-4 hour timeframe, the hybrid zone between day trading and swing trading where fees stop eating into the equity curve as aggressively. I let each AI choose its own indicators, signals, and order logic rather than steering it toward a specific approach, and I explicitly told each one that I did not want a strategy that repaints.

Repainting is one of the most common ways AI-generated strategies look incredible in a backtest and then fall apart in live trading. It happens when alerts and signals fire mid-bar, on data that hasn't been confirmed yet, and then change or disappear ("repaint") once the bar closes. A backtest on historical data generally sees only the finished bars, so it looks cleaner than reality. Avoiding it was non-negotiable.
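To make the idea concrete, here is a minimal Python sketch of a signal that fires mid-bar and is gone by the close. The breakout rule, the level of 100, and the tick prices are all made up for illustration; none of them come from the four strategies in this test.

```python
def breakout_signal(price: float, level: float) -> bool:
    """Hypothetical rule: the signal is on while price is above a breakout level."""
    return price > level


def evaluate_bar(ticks: list[float], level: float) -> dict:
    """Compare a signal checked on every tick with one checked only at the close."""
    fired_intrabar = any(breakout_signal(p, level) for p in ticks)
    confirmed_at_close = breakout_signal(ticks[-1], level)
    return {
        "fired_intrabar": fired_intrabar,
        "confirmed_at_close": confirmed_at_close,
        # Repaint: a live alert went out, but the finished bar shows no signal.
        "repainted": fired_intrabar and not confirmed_at_close,
    }


# One bar whose price pokes above 100 mid-bar, then closes back below it.
bar_ticks = [99.0, 100.5, 101.2, 99.4]
result = evaluate_bar(bar_ticks, level=100.0)
print(result)
```

In Pine Script, the usual guard against this is to act only on confirmed bars, for example by gating entries on the built-in `barstate.isconfirmed`. Whether a given AI-generated script actually does so is something to check in the code rather than assume.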

For the backtest itself, I gave each strategy a $100,000 account with a fixed $10,000 per trade. There are a million ways to set this up, but a fixed dollar amount per trade puts every strategy on an even playing field regardless of how aggressive its position sizing logic might be.
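As a rough illustration of what fixed-dollar sizing means for the numbers, the sketch below converts the $10,000 per trade into a position size and shows how much a single trade can move the $100,000 account. The entry price and the 5% trade return are hypothetical examples, not figures from the backtests.

```python
ACCOUNT_SIZE = 100_000.0  # starting equity used in the test
TRADE_SIZE = 10_000.0     # fixed dollars committed per trade


def units_for_trade(entry_price: float, trade_size: float = TRADE_SIZE) -> float:
    """Number of units (coins or shares) bought with a fixed dollar amount."""
    return trade_size / entry_price


def account_impact_pct(
    trade_return_pct: float,
    trade_size: float = TRADE_SIZE,
    account_size: float = ACCOUNT_SIZE,
) -> float:
    """Percent change in the whole account caused by one trade's percent return."""
    return trade_return_pct * trade_size / account_size


# Hypothetical example: entering at a price of 50,000 and closing the trade 5% higher.
print(units_for_trade(50_000.0))   # units purchased
print(account_impact_pct(5.0))     # effect on the full account, in percent
```

Because only a tenth of the account is deployed per trade, a 5% winner moves total equity by about 0.5%. That is worth keeping in mind when reading the return and drawdown figures later on, since they are measured against the full account.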

How Each AI Approached the Strategy

What stood out immediately was how differently each model interpreted the same instructions. The prompt didn't change, but the output reflected the underlying training and reasoning style of each model.

ChatGPT built a Trend Continuation Breakout Strategy. It looks for markets already in strong bullish or bearish regimes, waits for a period of volatility compression, then enters on a breakout with momentum confirmation. The idea is to avoid random chop and only enter when price has compressed, pulled back, and started expanding in the direction of the broader trend. ChatGPT required a few rounds of error fixes before the code ran cleanly.

Gemini produced the Volatility Adjusted Mean Reversion (VAMR) Strategy. At its core, it identifies the dominant macro trend and waits for statistically extreme counter-trend moves to enter — buying the dip in an uptrend and selling the rip in a downtrend. Notably, Gemini's code ran on the first try with zero edits required.

Grok delivered the Adaptive SuperTrend Confluence Strategy. It uses the SuperTrend indicator as the core engine for both direction and dynamic trailing stops, then layers simple confluence filters to avoid chop. Grok required one minor fix before the code executed properly.

Claude generated the Adaptive Momentum Breakout — a trend-following breakout system that only fires when three conditions align: higher timeframe trend direction, current timeframe momentum, and a clean Donchian channel break above average volume. Claude self-reported an expected 35-45% win rate with a profit factor in the 1.3 to 1.8 range. The code ran without a single edit needed.
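Claude's self-reported numbers imply something about trade shape that is easy to check with arithmetic. Assuming profit factor means gross profit divided by gross loss, a win rate and a profit factor together pin down how large the average winner must be relative to the average loser. The sketch below works that out; the function name is made up for illustration.

```python
def required_payoff_ratio(win_rate: float, profit_factor: float) -> float:
    """Average win / average loss needed to reach a profit factor at a given win rate.

    Derived from:
        profit_factor = (win_rate * avg_win) / ((1 - win_rate) * avg_loss)
    """
    if not 0.0 < win_rate < 1.0:
        raise ValueError("win_rate must be strictly between 0 and 1")
    return profit_factor * (1.0 - win_rate) / win_rate


# The corners of the range Claude reported: 35-45% win rate, 1.3-1.8 profit factor.
for win_rate in (0.35, 0.45):
    for profit_factor in (1.3, 1.8):
        ratio = required_payoff_ratio(win_rate, profit_factor)
        print(
            f"win rate {win_rate:.0%}, profit factor {profit_factor}: "
            f"average win must be about {ratio:.2f}x the average loss"
        )
```

Across that range the average winner has to be roughly 1.6 to 3.3 times the average loser, which is the typical profile of a trend-following breakout system: fewer wins, but bigger ones.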

Backtest Results: The Numbers Don't Lie

Once the strategies were coded and run against historical data, the gap between the best and worst performers was significant. Win rate, drawdown, profit factor, and total trade count all told the same story across multiple assets and timeframes.
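For readers who want to reproduce these metrics from an exported trade list, here is a minimal sketch of how they can be computed. It assumes a plain list of per-trade profit and loss in dollars and measures drawdown on closed-trade equity only, so its figures may differ from what a charting platform reports. The sample trades are invented.

```python
def summarize_trades(pnls: list[float], starting_equity: float = 100_000.0) -> dict:
    """Compute trade count, win rate, profit factor, net return and max drawdown."""
    wins = [p for p in pnls if p > 0]
    losses = [p for p in pnls if p < 0]
    gross_profit = sum(wins)
    gross_loss = -sum(losses)

    equity = starting_equity
    peak = starting_equity
    max_drawdown = 0.0
    for pnl in pnls:
        equity += pnl
        peak = max(peak, equity)
        # Drawdown is the drop from the highest equity seen so far.
        max_drawdown = max(max_drawdown, (peak - equity) / peak)

    return {
        "trades": len(pnls),
        "win_rate": len(wins) / len(pnls) if pnls else 0.0,
        "profit_factor": gross_profit / gross_loss if gross_loss > 0 else float("inf"),
        "net_return_pct": 100.0 * (equity - starting_equity) / starting_equity,
        "max_drawdown_pct": 100.0 * max_drawdown,
    }


# Invented closed-trade results, in dollars.
sample_pnls = [500.0, -200.0, -300.0, 800.0, -100.0]
summary = summarize_trades(sample_pnls)
print(summary)
```

No single number here is enough on its own. A high profit factor on a handful of trades, or a strong return with a deep drawdown, should be read together with the trade count.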

ChatGPT's strategy performed reasonably well on the daily timeframe: Bitcoin showed roughly 33% returns with a 4.64% drawdown across 98 trades since 2015. The problem was the lower timeframes. As I dropped down to the 4-hour, 2-hour, and 1-hour charts, the strategy started flatlining or losing ground over the past several years. It's profitable, but nowhere near buy-and-hold returns for Bitcoin over the same period. That on its own is not a dealbreaker, but the lower timeframes really exposed its weakness in handling trending versus choppy conditions.

Gemini's strategy was the clear underperformer. Across Bitcoin, ETH, SPY, and QQQ, on multiple timeframes, it failed to produce any equity curve I'd be willing to trade. A few of the configurations were technically profitable, but the trade counts were far too low (sometimes under 35 trades over a 10-year span) to draw any meaningful conclusions. Sample size matters, and Gemini's was too small.

Grok's strategy was an interesting case. The 4-hour ETH backtest showed the best raw returns of any strategy I tested, but almost all of those gains came from a single large trade back in 2017, with long stretches of flatlining since. With only 41-50 trades across the full backtest, the profit factor looked great on paper but the small sample makes it difficult to trust. Better than Gemini, but still not something I'd commit capital to without serious refinement.

Claude's strategy was the standout. On Bitcoin's 4-hour timeframe, it produced 474 trades with roughly 100% returns and a 10% drawdown. That was the highest trade count of any strategy in the test, which gives the results far more statistical weight. The daily timeframe showed a clean upward-sloping equity curve with 121 trades, a 55% win rate, and a 5% drawdown. ETH performance on the 2-hour and 4-hour timeframes was also solid, with consistent up-and-to-the-right equity curves. It's not perfect, since performance has flatlined somewhat over the past year or two, but the larger sample size makes it the most credible starting point of the four.

The Winner: Why Claude Came Out on Top

Claude generated the strongest overall result for one core reason: trade count. A strategy that produces 474 trades over a backtest window gives you enough data to judge whether the edge is real. A strategy that produces 35 trades gives you mostly noise. Claude's Adaptive Momentum Breakout had the best combination of meaningful sample size, reasonable drawdown, and consistent performance across multiple assets, particularly Bitcoin and Ethereum on the 2-hour and 4-hour timeframes.
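The sample-size point can be put in rough numbers. The sketch below uses the normal approximation for a proportion to estimate how uncertain a measured win rate is at 474 trades versus 35. It assumes trades are independent, which real trades often are not, and it uses a 50% win rate purely as a neutral illustration rather than a figure from the test.

```python
import math


def win_rate_margin(win_rate: float, trades: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error on a win rate (normal approximation)."""
    if trades <= 0:
        raise ValueError("trades must be positive")
    standard_error = math.sqrt(win_rate * (1.0 - win_rate) / trades)
    return z * standard_error


for trades in (474, 35):
    margin = win_rate_margin(0.5, trades)
    print(f"{trades} trades: win rate known to within about +/-{margin:.1%}")
```

Under those assumptions, 474 trades pins the win rate down to within about 4.5 percentage points, while 35 trades leaves it uncertain by more than 16. A 35-trade strategy showing a 60% win rate is statistically hard to tell apart from a coin flip.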

ChatGPT was a close second. The logic was sound, the daily timeframe results were respectable, and with some refinement it could be competitive. But Claude's strategy gave more trades to validate, which is what separates a backtest you can build on from a backtest you should ignore.

Important caveat: none of these strategies were optimized. The Pine Script settings panel for any of them includes dozens of inputs that can dramatically change how the strategy performs. A strong starting point is exactly that — a starting point. The real work is iterating from there.

How to Forward Test Before Going Live

This is the part of the process almost every retail trader skips, and it's the part that matters most. Backtesting tells you how a strategy would have performed against historical data. Forward testing tells you how it actually performs in real time, with current market conditions and real execution. The gap between those two things is where most "profitable" strategies blow up.

The biggest reason that gap exists is intra-bar execution. A backtest can easily show you where an entry and exit "should" have occurred, because it works from bars that are already complete. But what about a strategy with trailing stops or take-profits that fire mid-bar? In a real market, an intra-bar move might trigger your stop-loss before the bar closes. In the backtest, the same strategy might only act once the bar is confirmed. Same logic, different fills, completely different equity curve.
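A small sketch shows how the same stop can produce two different outcomes on one bar. It models a long position with a stop-loss under two assumptions: the stop is checked against the bar's low (closer to live behaviour) or only against the close. The `Bar` type, the prices, and the fill rule are simplified assumptions, not how any particular platform simulates orders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Bar:
    open: float
    high: float
    low: float
    close: float


def exit_intrabar(bar: Bar, stop: float) -> Optional[float]:
    """Long stop checked against the bar's low; returns the fill price or None."""
    if bar.low <= stop:
        # If the bar opened below the stop (a gap), assume the fill is at the open.
        return min(stop, bar.open)
    return None


def exit_on_close(bar: Bar, stop: float) -> Optional[float]:
    """Long stop checked only once the bar has closed."""
    if bar.close <= stop:
        return bar.close
    return None


# A bar that dips to 94 mid-bar, then recovers to close at 102. Stop is at 95.
bar = Bar(open=100.0, high=103.0, low=94.0, close=102.0)
print("intra-bar model:", exit_intrabar(bar, stop=95.0))   # stopped out
print("close-only model:", exit_on_close(bar, stop=95.0))  # still in the trade
```

In the first model the position is stopped out at 95 and misses the recovery. In the second it never exits and books the bar as a gain. Multiply that disagreement across hundreds of bars and the two equity curves stop resembling each other.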

The cleanest way to close that gap is to forward test in an automated environment that captures every fill in real time and lets you compare it directly to what your backtest predicted. That's why I run every new strategy through AlphaInsider before risking a dollar. I take the strategy, plug it in, set up the alerts on TradingView with a custom webhook, and let it forward test for a defined period. Then I compare the live fill prices and times to the backtest. If they match, I trust the strategy enough to consider sizing into it. If they diverge, the strategy goes back to the drawing board — because that means the backtest was lying.
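The comparison step itself is simple enough to sketch. The code below pairs each backtest fill with the corresponding live fill and flags any that differ by more than a tolerance in price or time. The `Fill` type, the tolerances, and the sample data are hypothetical; this does not use or represent any AlphaInsider or TradingView API, and it assumes the fills have already been exported and lined up in order.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Fill:
    time: datetime
    price: float


def compare_fills(
    backtest: list[Fill],
    live: list[Fill],
    max_price_diff_pct: float = 0.1,   # hypothetical tolerance
    max_delay_seconds: float = 60.0,   # hypothetical tolerance
) -> list[dict]:
    """Pair fills in order and report how far each live fill is from the backtest."""
    if len(backtest) != len(live):
        raise ValueError("backtest and live have different numbers of fills")
    report = []
    for expected, actual in zip(backtest, live):
        price_diff_pct = 100.0 * abs(actual.price - expected.price) / expected.price
        delay_seconds = abs((actual.time - expected.time).total_seconds())
        report.append({
            "price_diff_pct": price_diff_pct,
            "delay_seconds": delay_seconds,
            "matches": price_diff_pct <= max_price_diff_pct
            and delay_seconds <= max_delay_seconds,
        })
    return report


# Invented fills: the first lines up, the second fired 48 minutes early at a worse price.
backtest_fills = [
    Fill(datetime(2024, 1, 1, 4, 0, 0), 42_000.0),
    Fill(datetime(2024, 1, 2, 8, 0, 0), 43_000.0),
]
live_fills = [
    Fill(datetime(2024, 1, 1, 4, 0, 5), 42_010.0),
    Fill(datetime(2024, 1, 2, 7, 12, 0), 42_500.0),
]
report = compare_fills(backtest_fills, live_fills)
for row in report:
    print(row)
```

A fill that arrives well before the bar close, like the second one here, is the signature of order logic acting on intra-bar data. The right tolerances depend on the timeframe and the asset's liquidity, so treat the defaults above as placeholders.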

I've personally caught strategies that looked phenomenal in backtests but performed atrociously the moment I forward tested them. The order logic was firing off intra-bar data that the backtest could never replicate. Without that forward testing layer, I would have lost real money on something that looked like a winner on paper.

Should You Trust AI to Build Your Trading Strategies?

The honest answer: AI is an excellent collaborator and a terrible decision-maker. Every model in this test produced something usable as a starting point — even Gemini, the worst performer of the group, generated valid Pine Script code that ran cleanly. But every single strategy needed validation, refinement, and forward testing before it could be trusted with real capital. The AI didn't replace the work — it accelerated it.

If you're using AI to skip the process, you're going to lose money. If you're using it to compress weeks of strategy ideation into hours, you're using it correctly. Right now, ChatGPT and Claude are clearly in a different league than Gemini and Grok for this specific use case. Either one is a strong starting point — and from there, the real work begins.

Frequently Asked Questions

Which AI is best for building trading strategies?

Based on this head-to-head test, Claude produced the most robust strategy — primarily because it generated the largest sample size of trades and held up across multiple assets and timeframes. ChatGPT was a close second. That said, results can vary depending on the prompt, asset class, and strategy type, so don't take any single test as gospel. Run your own comparison for the specific market you trade.

Can ChatGPT actually create profitable trading strategies?

Yes — ChatGPT generated a profitable Trend Continuation Breakout strategy in this test, particularly on Bitcoin's daily timeframe. But profitable in a backtest is not the same as profitable in live markets. Every strategy needs to be validated through forward testing before being traded with real capital.

Why did Gemini perform so poorly?

Gemini's Volatility Adjusted Mean Reversion strategy was the worst performer in this test, generating very few trades and underperforming across nearly every asset and timeframe. Mean reversion strategies are notoriously difficult to get right in trending markets like crypto, and the small sample size made it impossible to draw confident conclusions from the few profitable configurations.

What's the difference between backtesting and forward testing?

Backtesting runs a strategy against historical data to see how it would have performed in the past. Forward testing runs the same strategy in real time against current market data, usually in an automated environment, to confirm the edge still exists outside of the historical sample. Forward testing is what catches strategies that look great on paper but fail in live execution due to repainting, intra-bar order logic, or curve fitting.

Is it safe to trade an AI-generated strategy with real money?

Not without doing your own due diligence. AI-generated strategies should be treated like any other strategy you didn't build yourself — backtested rigorously, forward tested in real time, and stress tested across multiple market conditions and assets before being sized up.

What is repainting and why does it matter?

Repainting happens when a strategy's signals fire mid-bar based on data that hasn't been confirmed yet, then change once the bar closes. This makes a backtest look better than reality because the strategy appears to "know" what's about to happen. A repainting strategy will look fantastic in TradingView and then fail miserably in live trading. Avoiding repainting is one of the most important constraints to bake into your prompt when asking AI to generate a strategy.

Final Thoughts

The takeaway from this experiment isn't that one AI is universally better — it's that AI is now a legitimate part of the strategy development workflow, and treating it like a magic profit machine is a fast way to lose money. Use it to accelerate your process, not replace it. Backtest everything. Forward test before you risk real capital. And keep your own judgment in the driver's seat.